Abstract

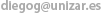

Motion blur is a frequent requirement for rendering high-quality animated images. However, it usually demands more computational resources than rendering images that have not been temporally antialiased. In this article we study how high-level properties such as object material and speed, shutter time, and antialiasing level influence the perceived quality of motion-blurred images. Based on scenes containing variations of these parameters, we design several psychophysical experiments to determine how strongly they affect the perception of image quality.

This work offers insight into the effects of these parameters and exposes certain situations in which motion-blurred stimuli may be indistinguishable from a gold standard. As an immediate practical application, images of similar quality can be produced with reduced computing requirements.

Algorithmic efforts have traditionally focused on finding improved methods to alleviate sampling artifacts by steering computation toward the most important dimensions of the rendering equation. Concurrently, rendering algorithms can take advantage of certain perceptual limits to simplify and optimize computations. To our knowledge, none has identified or used these limits in the rendering of motion blur. This work can be considered a first step in that direction.

Downloads

- PDF [1.5 MB]

- MOV [4.9 MB]