News

- 12 October 2014: We presented our paper at Pacific Graphics 2014; the slides are available.

- 28 February 2014: Website launched!

Abstract

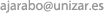

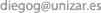

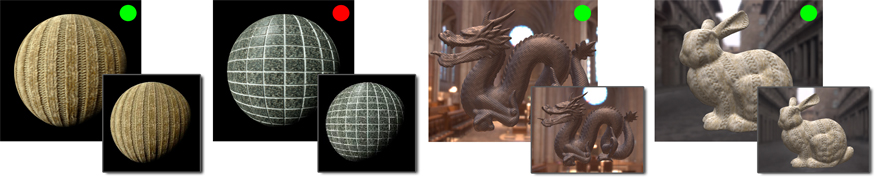

The bidirectional texture function (BTF) data structure was a breakthrough for appearance modeling in computer graphics. However, more research is needed to make BTFs practical in rendering applications. We present the first systematic study of the effects of approximate filtering on the appearance of BTFs, exploring the spatial, angular and temporal domains over a varied set of stimuli. We perform our initial experiments on simple geometry and lighting, and verify our observations in more complex settings. We compare multi-dimensional filtering with conventional mipmapping, and find that multi-dimensional filtering produces superior results. We examine the trade-off between under- and oversampling, and find that different filtering strategies can be applied in each domain while maintaining visual equivalence with respect to a ground truth. For example, we find that preserving contrast is more important in static than in dynamic images, indicating that greater levels of spatial filtering are possible for animations. We also find that filtering can be performed more aggressively in the angular domain than in the spatial domain. Additionally, we find that high-level visual descriptors of the BTF are linked to the perceptual performance of pre-filtered approximations. In turn, some of these high-level descriptors correlate with low-level statistics of the BTF. We show six practical applications of our findings to improve filtering, rendering and compression strategies.

Downloads

Links

- CS Digital Library: [Vol. 31(2)] [Paper]

Bibtex

Acknowledgements

We want to thank the participants of the experiments, Carlos Aliaga for the scene in Figure 15, Susana Castillo, Su Xue and Yitzchak Lockerman for their help setting up the experiments, and the members of the Graphics and Imaging Lab for valuable discussions and feedback. This research has been funded by the European Commission, Seventh Framework Programme, through projects GOLEM (Marie Curie IAPP, grant: 251415) and VERVE (ICT, grant: 288914), the Spanish Ministry of Science and Technology (TIN2010-21543), and the National Science Foundation (grants: IIS-1064412 and IIS-1218515). Hongzhi Wu was additionally supported by NSF China (No. 61303135) and the Fundamental Research Fund for the Central Universities (No. 2013QNA5011).