News

- 19 September 2014: slides from our SIGGRAPH 2014 presentation uploaded!

- 06 August 2014: code and datasets available (see Downloads).

- 19 May 2014: preprint available (see Downloads).

- 24 April 2014: website launched!

Abstract

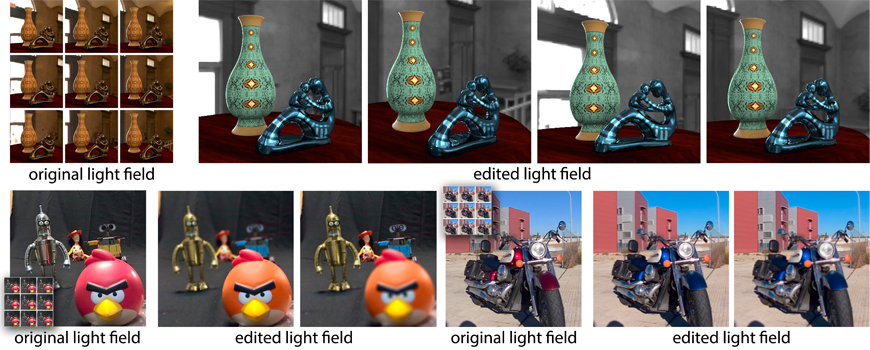

We present a thorough study evaluating different light field editing interfaces, tools and workflows from a user perspective. This is of special relevance given the multidimensional nature of light fields, which can make common image editing tasks complex in light field space. We additionally investigate the potential benefits of using depth information when editing, and the limitations imposed by imperfect depth reconstruction using current techniques. We perform two experiments, collecting both objective and subjective data from a variety of point-based editing tasks of increasing complexity. In the first experiment, we rely on perfect depth from synthetic light fields and focus on simple edits. This allows us to gain basic insight into light field editing and to design a more advanced editing interface. This interface is then used in the second experiment, which employs real light fields with imperfect reconstructed depth and covers more advanced editing tasks. Our study shows that users can edit light fields with our tested interface and tools, even in the presence of imperfect depth. They follow different workflows depending on the task at hand, mostly relying on a combination of different depth cues. Finally, we confirm our findings by asking a set of artists to freely edit both real and synthetic light fields.

Downloads

- PDF [43.8 MB]

- Supplementary Material [36.5 MB]

- Main Video [112 MB]

- Additional Videos: Experiment 1 [73.2 MB], Experiment 2 [109 MB]

- Slides [PPTX 83.2 MB] [PDF 4.4 MB]

- User Interface and Tools

- Dataset

BibTeX

Related

- 2015: Light Field Editing Based on Reparameterization

- 2014: Favored Workflows in Light Field Editing

- 2011: Efficient Propagation of Light Field Edits

Acknowledgements

We want to thank the reviewers for their insightful comments, the participants of the experiments (in particular Sara Lopez, Patrick Moosebrugger, Carlos Aliaga and Jose I. Echevarria), Chia-Kai Liang for his help with the Lytro SDK, the models in the captured light fields (Paz Hernando, Julio Marco, Laura Serra and Raul Buisan), and the members of the Graphics and Imaging Lab and the REVES Group for valuable discussions and feedback. We also want to thank the authors of [Kim et al. 2013a] and [Wanner and Goldluecke 2012] for sharing their light fields, Guillermo Leal Llaguno for the San Miguel scene, Emmanuelle Chapoulie and the AIM@SHAPE project for the vase, and Infinite Realities for the head data. This research has been partially funded by the European Commission, 7th Framework Programme, through projects GOLEM (Marie Curie IAPP, grant: 251415), VERVE (ICT, grant: 288914) and TROPIC (ICT, grant: 318784), the Spanish Ministry of Science and Technology (TIN2010-21543), project TAMA, Intel Corporation and Adobe. Belen Masia has additionally been supported by an Nvidia Graduate Fellowship.